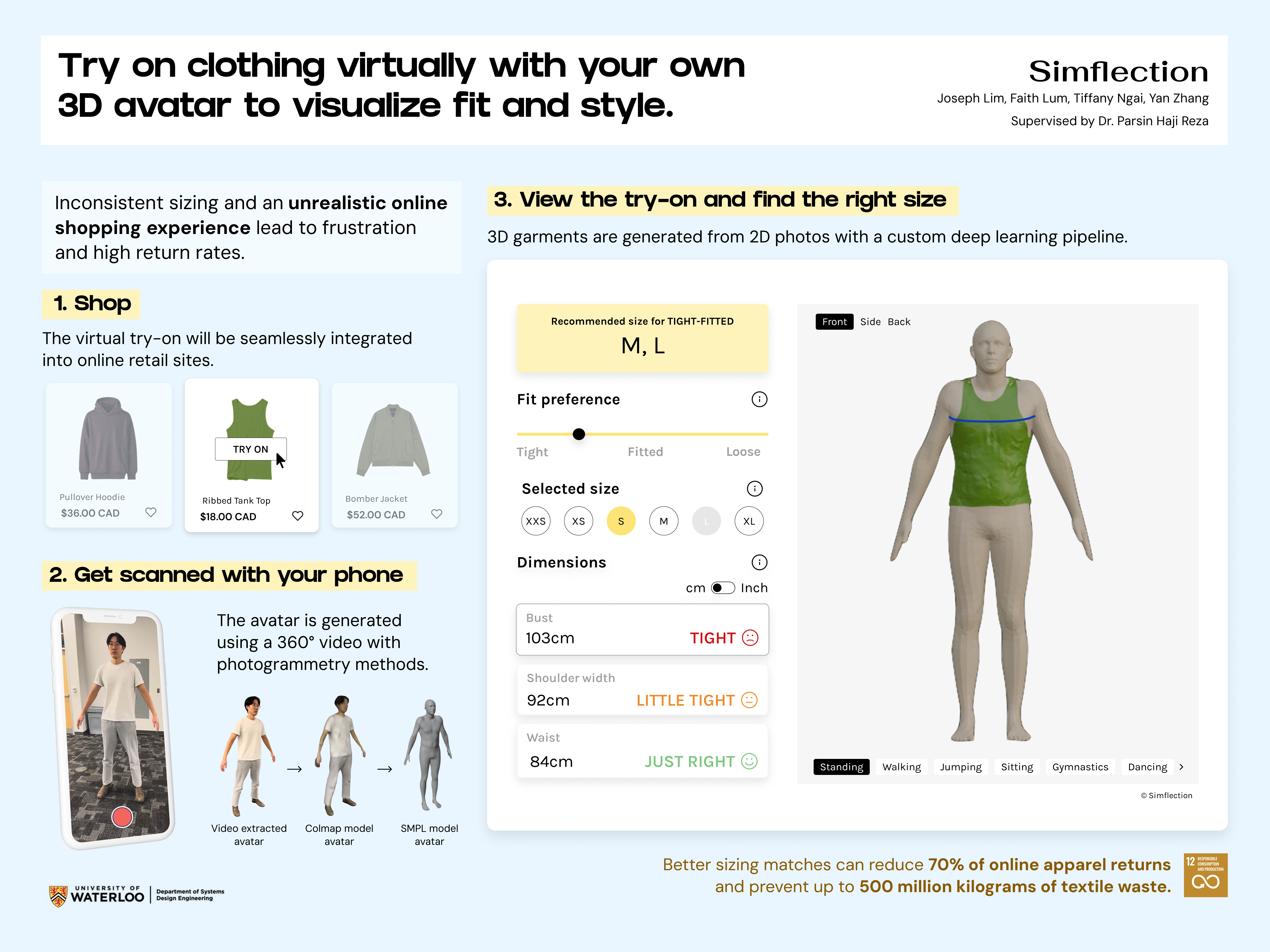

Try on clothing virtually with your own 3D avatar to visualize fit and style.

Simflection

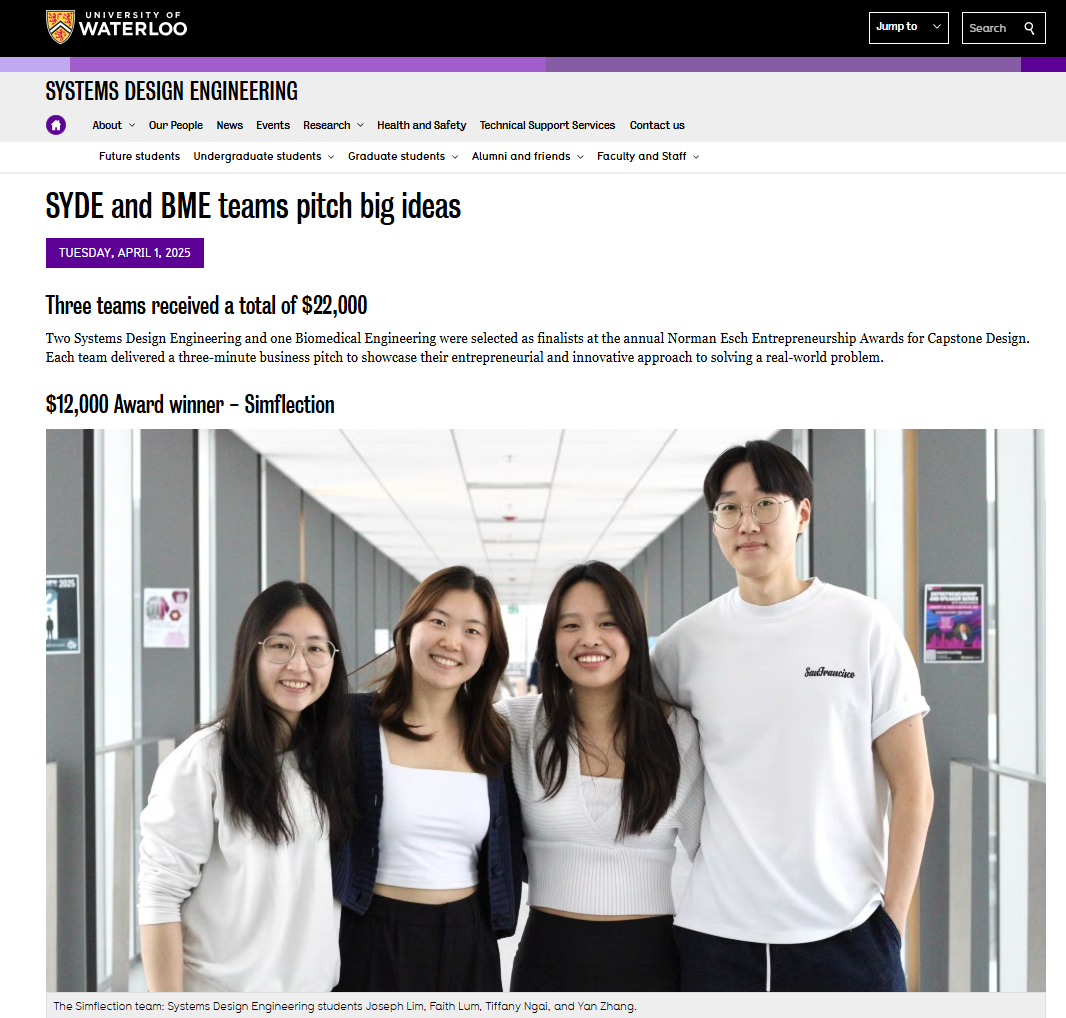

Joseph Lim · Faith Lum · Tiffany Ngai · Yan Zhang

Supervised by Dr. Parsin Haji Reza · University of Waterloo, Systems Design Engineering

demos

Shopify extension with virtual try-on

3D garments generated from 2D images

behind the build

Three technical components.

- 01

We generate a person's 3D model from a 360° video using photogrammetry, then post-process it to extract body measurements.


An AWS GPU pipeline (EC2 + Lambda, provisioned on demand) processes the phone video through photogrammetry, COLMAP reconstruction, and SMPL fitting. We standardized CUDA + PyTorch environments across the team so we could fairly benchmark ~10 state-of-the-art reconstruction methods before settling on a hybrid approach we could defend.
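The measurement post-processing is not described in detail here, so the following is only a minimal sketch of one way a girth (chest, waist, hip) could be read off a fitted body mesh. It assumes a y-up mesh given as a list of `(x, y, z)` vertices in metres; the function names `convex_hull` and `circumference_at_height` and the `band` parameter are hypothetical. It slices the vertices near a given height and measures the perimeter of their convex hull, which behaves like a taut measuring tape.

```python
import math

def convex_hull(points):
    """Andrew's monotone chain: hull of 2D points in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        # z-component of (a - o) x (b - o); positive for a left turn
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]

def circumference_at_height(vertices, height, band=0.01):
    """Approximate girth at `height` (assumed y-up, metres).

    Collects vertices within `band` of the height, projects them onto the
    horizontal x-z plane, and returns the convex hull perimeter.
    """
    ring = [(x, z) for x, y, z in vertices if abs(y - height) <= band]
    hull = convex_hull(ring)
    if len(hull) < 3:
        return 0.0  # not enough points to form a closed loop
    return sum(
        math.dist(hull[i], hull[(i + 1) % len(hull)]) for i in range(len(hull))
    )
```

A slice that cuts through several body parts at once (for example torso and arms) would need to be separated first, which this sketch does not attempt.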

- 02

To avoid individually scanning the store's garments, we developed a deep-learning pipeline that converts their existing product photos into 3D garments.


Garments are reconstructed from 2D photos via keypoints + an implicit representation. A 3D segmentation step then extracts a hollow, wearable garment mesh that can be fitted onto a 3D avatar.
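To illustrate the extraction step, here is a minimal sketch of pulling a garment submesh out of a larger reconstructed mesh. It assumes the segmentation has already produced one label per vertex (how those labels are predicted is not covered here), and the function name `extract_labeled_submesh` and the label strings are hypothetical. It keeps only triangles whose three vertices are all labeled as garment, then renumbers the vertices so the result is a standalone mesh.

```python
def extract_labeled_submesh(vertices, faces, labels, keep="garment"):
    """Return (vertices, faces) for the part of the mesh labeled `keep`.

    vertices: list of (x, y, z) tuples
    faces:    list of (i, j, k) vertex-index triples
    labels:   one label per vertex (assumed output of a segmentation step)
    """
    # Keep a triangle only if every corner carries the target label.
    kept_faces = [f for f in faces if all(labels[i] == keep for i in f)]

    # Collect the vertices still referenced and build an old -> new index map.
    used = sorted({i for f in kept_faces for i in f})
    remap = {old: new for new, old in enumerate(used)}

    new_vertices = [vertices[i] for i in used]
    new_faces = [tuple(remap[i] for i in f) for f in kept_faces]
    return new_vertices, new_faces
```

Because only the labeled surface is kept and no caps are added at the openings, the result stays a hollow shell rather than a closed solid.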

- 03

We rig the avatar and simulate the try-on in real time, so customers see how the garment moves with them.


The avatar runs through standing, walking, jumping, sitting, gymnastics, and dancing animations. The garment is overlaid as a deformable cloth mesh that flexes with the body in real time.
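The simulation method is not named here, so as an illustration only, the sketch below shows the distance-constraint projection used in position-based dynamics, a common technique for real-time cloth. It is a stand-in, not the project's actual solver. Cloth vertices are treated as particles, mesh edges as constraints with a rest length, and `pinned` marks particles assumed to be attached to the animated body. The function name and parameters are hypothetical.

```python
import math

def project_distance_constraints(positions, edges, rest_lengths,
                                 pinned=(), iterations=10):
    """Nudge particles so each edge approaches its rest length.

    positions:    list of (x, y, z) particle positions
    edges:        list of (i, j) particle-index pairs
    rest_lengths: target length for each edge
    pinned:       indices that must not move (e.g. attached to the avatar)
    """
    pos = [list(p) for p in positions]
    pinned = set(pinned)
    for _ in range(iterations):
        for (i, j), rest in zip(edges, rest_lengths):
            delta = [b - a for a, b in zip(pos[i], pos[j])]
            dist = math.sqrt(sum(d * d for d in delta))
            if dist == 0.0:
                continue  # direction undefined; skip this edge
            wi = 0.0 if i in pinned else 1.0
            wj = 0.0 if j in pinned else 1.0
            if wi + wj == 0.0:
                continue  # both ends fixed
            # Share the length error between the two movable ends.
            corr = (dist - rest) / (dist * (wi + wj))
            for k in range(3):
                pos[i][k] += wi * corr * delta[k]
                pos[j][k] -= wj * corr * delta[k]
    return [tuple(p) for p in pos]
```

In a full solver this projection would run every frame after moving the pinned particles with the animated avatar and applying gravity, alongside collision handling against the body, none of which is shown here.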

my role

I started Simflection with Tiffany. We shaped the product vision together and tested the feasibility of the research components before splitting work across the team. I built the garment reconstruction pipeline (2D photos → 3D mesh) and contributed to the AWS GPU avatar pipeline, the full-stack Shopify extension, and product design.

memories